Today's algorithmic composition tutorial looks at manipulating a tone row in Max and PureData to generate musical material. We'll also have a look at one technique that's useful in generating more fully formed compositions in Pd and Max than some of the musical sketches we've generated so far.

Jump to the end of the post to hear some sample algorithmic music output from this patch.

As with yesterday's OpenMusic tutorial we're using the tone row from Berg's Violin Concerto:

G, Bb, D, F#, A, C, E, G#, B, C#, Eb, F

You can use any tone row of your choosing. To start with, we'll define our tone row in a table in PureData:

and in Max:
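If it helps to see the table as plain data, here is a minimal Python sketch of the same row. It is not part of either patch: the names `TONE_ROW` and `row_pitch` are made up for illustration, pitch classes assume C = 0, and the base note of 60 (middle C) is an assumed register.

```python
# Berg Violin Concerto row as pitch classes (C = 0), in row order:
# G, Bb, D, F#, A, C, E, G#, B, C#, Eb, F
TONE_ROW = [7, 10, 2, 6, 9, 0, 4, 8, 11, 1, 3, 5]

def row_pitch(index, base=60):
    """Return a MIDI note for a table index, like reading a table by index.

    base=60 (middle C) is an assumed register, not taken from the patch.
    Indices past the end wrap around the 12-note row.
    """
    return base + TONE_ROW[index % len(TONE_ROW)]

# Playing straight through the row once:
print([row_pitch(i) for i in range(12)])
```

A quick sanity check for any row you substitute is that it contains each of the 12 pitch classes exactly once.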

We'll start simply by playing through the tone row. As Max and PureData read and write to tables in slightly different ways, the patches are set up a little differently in each. In Max the table object is used to read the table data:

In PureData the tabread object is used to read the table data:

So far our patches play through the tone row, but they're pretty boring as there is no rhythmic, pitch range or dynamic variation.

To start with, we'll add in some rhythmic variety. If you want the rhythms to fit a strict metre it's best to define the possible rhythms in a table. A previous algorithmic composition tutorial looks at working with rhythm in this way. We'll allow the tempo to be freer by not sticking to a strict metre and using random rest and note onsets.

Here we've added in a 50/50 chance of each metro count triggering a note or leaving a rest. If random outputs a 0, the select object matches this, advancing the counter, triggering the note and choosing a new random metro time. If random outputs anything other than a 0, a new metro time will be chosen but a new note won't be triggered. You can change the probability of there being a note or rest by changing the value in the first random box. In PureData the patch now looks like this:
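The note-or-rest logic can also be written out in a few lines of Python. This is only a sketch of the idea, not a transcription of the patch: the function name `tick`, the `density` parameter (standing in for the value in the first random box) and the 100 to 600 ms range for the metro time are all assumptions.

```python
import random

def tick(position, density=2, min_ms=100, max_ms=600, rng=random):
    """One metro tick: decide note or rest, then pick the next metro time.

    A note fires only when the draw is 0, so density=2 gives a 50/50
    chance and larger values give more rests. min_ms and max_ms are
    assumed values, not read from the patch.
    """
    if rng.randrange(density) == 0:      # random -> select 0
        played = position                # index of the row note to play
        position = (position + 1) % 12   # advance the counter
    else:
        played = None                    # rest: counter stays where it is
    next_ms = rng.randint(min_ms, max_ms)  # new metro time either way
    return played, position, next_ms

# Ten ticks with the default 50/50 setting:
pos = 0
for _ in range(10):
    played, pos, wait = tick(pos)
    print("rest" if played is None else f"note {played}", f"then wait {wait} ms")
```

Setting `density=1` plays a note on every tick, which is a handy way to check that the counter steps through the row in order.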

In Max the modifications are the same, with a random object giving us a 50/50 chance of a note or rest and further random objects being used to set a new metro time on each tick.

Next we'll add in the possibility of playing through the tone row harmonically. To do this we add a further random object that chooses how many of the next tone row pitches to play at a time, in this case from 1 to 4. This random object feeds into an uzi object in Max and an until object in PureData. We've also added a further random object that will choose a random transposition of the tone row on each play-through:

The counter's last outlet does fire when the maximum count is reached, but using it would apply the new transposition before the last note of the row has played. A select 0 is used instead, so the transposition changes as the counter wraps back to the start of the row.
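To make the chord and transposition logic concrete, here is a rough Python sketch under some assumptions: the function name `next_chord` is hypothetical, the transposition is drawn from 0 to 11 semitones, and results are kept as pitch classes (mod 12) rather than MIDI notes.

```python
import random

TONE_ROW = [7, 10, 2, 6, 9, 0, 4, 8, 11, 1, 3, 5]  # Berg row, C = 0

def next_chord(position, transposition, max_voices=4, rng=random):
    """Take 1..max_voices consecutive row pitches as one chord.

    A new transposition is chosen whenever the counter is at 0, so it
    applies from the first note of a play-through instead of cutting in
    before the last note of the previous one.
    """
    size = rng.randint(1, max_voices)
    chord = []
    for _ in range(size):                      # stands in for uzi / until
        if position == 0:                      # stands in for select 0
            transposition = rng.randrange(12)  # assumed range: 0..11
        chord.append((TONE_ROW[position] + transposition) % 12)
        position = (position + 1) % len(TONE_ROW)
    return chord, position, transposition

pos, tr = 0, 0
for _ in range(6):
    chord, pos, tr = next_chord(pos, tr)
    print(chord, "transposition", tr)
```

Note that a chord which straddles the end of the row will mix two transpositions in this sketch, since the change happens exactly at position 0.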

In PureData (the blue selected objects are the new ones to add):

We'll add in a little more musical interest by allowing the MIDI velocity to vary. In PureData add in a new subpatch called pd velocity to our main patch:

Then make the contents of the pd velocity subpatch look like this:

In Max add in a new patcher called p velocity to the main patch:

Then make the contents of the p velocity patcher look like this:
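Whatever the exact layout of the subpatch, the underlying idea is a random draw between a minimum and a maximum velocity. A minimal Python sketch of that idea follows; the function name and the default bounds of 40 and 110 are placeholders, not values from the patch.

```python
import random

def random_velocity(v_min=40, v_max=110, rng=random):
    """Pick a MIDI velocity between v_min and v_max inclusive.

    The default bounds are placeholders. Values are clamped to 1..127 so
    a note-on is never sent with velocity 0, which MIDI treats as note-off.
    """
    lo = max(1, min(v_min, v_max, 127))
    hi = min(127, max(v_min, v_max, 1))
    return rng.randint(lo, hi)

print([random_velocity() for _ in range(8)])        # moderate dynamic range
print([random_velocity(90, 127) for _ in range(8)])  # consistently loud
```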

At the moment all of the pitches occur in the same octave. When many notes play at the same time this gives very close voicings. As each of the pitch classes from the tone row can occur in any octave, we'll add a random octave offset to each note. Add this subpatch to your main patch in PureData:

and make the pd octave subpatch look like this:

In Max the setup is very similar. Your main patch should look like this, with an added p octave patcher:

And the p octave subpatch should look like this:
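As a text-only illustration of the octave idea (not a copy of either subpatch), the following Python sketch places a pitch class in a randomly chosen octave. The name `place_in_octave`, the lowest pitch of 36 and the range of 4 octaves are assumptions made for the example.

```python
import random

def place_in_octave(pitch_class, lowest_pitch=36, octave_range=4, rng=random):
    """Return a MIDI note for pitch_class in a randomly chosen octave.

    lowest_pitch is the lowest allowed MIDI note and octave_range the
    number of octaves to choose from; both defaults are assumptions.
    """
    # First note with this pitch class at or above lowest_pitch.
    first = lowest_pitch + (pitch_class - lowest_pitch) % 12
    note = first + 12 * rng.randrange(octave_range)
    while note > 127:          # fold back into the valid MIDI range
        note -= 12
    return note

# The same G (pitch class 7) scattered over four octaves:
print([place_in_octave(7) for _ in range(8)])
```

A small `octave_range` keeps chords tightly voiced, while a larger one spreads the same pitch classes across the keyboard.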

We now have a patch that can generate music from a tone row, with control over a number of different musical parameters. We can change:

- the maximum number of notes that sound at a time by altering the random object that feeds uzi in Max or until in PureData

- the min and max velocity by altering our velocity subpatch

- the probability of notes or rests occurring by changing the value of the first random object

- the octave distribution by altering our octave subpatch

This gives us a good range of musical control, allowing us to develop the light and shade of a composition, from, say, sparse high notes to dense, fast, closely spaced chords. Rather than editing the patch as we go, we can get more control by using sends and receives.

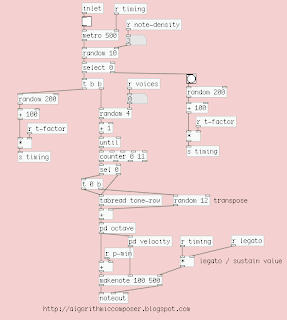

Here in PureData I've put the whole of our patch so far in a 'pd tone-rows' subpatch and have used a metro object connected to a counter to keep time. The counter feeds into a select object, and as each specified time is reached a set of values is sent out to our patch. For example, at 1 second the subpatch begins to play with 1 voice, the note density is set to 5 (a high probability of rests over notes) and a small octave range is used. As each time interval is reached the generated parts become busier, until the patch returns to a sparse melody to finish and is turned off at 60 seconds:

In Max and Pd a semicolon in a message can be used as a send, as above. You'll need to set up receives in your patch for this to work. So the pd tone-rows subpatch now looks like this:

And some receives also need to be added to your subpatches. The pd octave subpatch should look like this:

And the pd velocity subpatch should look like this, with a receive used to set the minimum and maximum MIDI velocity:

In Max the procedure is very similar with a metro outputting pulses every second, a counter outputting the passing seconds and a select triggering the various messages:

The patch we've worked on to generate the MIDI data has been put in its own p tone-rows subpatch and now looks like this with the added receive objects:

Some receives also need to be added to your subpatches. The p octave subpatch should look like this:

And the p velocity subpatch should look like this, with a receive used to set the minimum and maximum MIDI velocity:

You may have noticed that a few extra receives have been used in the Max and PureData patches:

- r t-factor multiplies the metro value, allowing tempo changes

- r legato multiplies the note length, allowing staccato and legato effects

- r p-min sets the minimum MIDI pitch, allowing the composition to change pitch range over time
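The two multipliers are easy to picture as arithmetic. In this small Python sketch the function name `scaled_timing` is hypothetical, and it assumes the note length is derived from the scaled metro time, which the patch may or may not do.

```python
def scaled_timing(metro_ms, t_factor=1.0, legato=1.0):
    """Apply the tempo and legato multipliers to one step.

    Assumption: note length is based on the scaled metro time. The text
    only says that legato multiplies the note length.
    """
    step_ms = metro_ms * t_factor   # t-factor below 1 speeds things up
    note_ms = step_ms * legato      # legato below 1 gives staccato notes
    return step_ms, note_ms

print(scaled_timing(400, t_factor=0.5, legato=0.5))  # faster and staccato
print(scaled_timing(400, t_factor=2.0, legato=1.5))  # slower, notes overlap
```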

You can hear some sample output from the patch here:

Using a master metronome and counter together with sent messages allows you to change the type of musical material your patch generates over time. This is a good way of developing your patches from musical sketches into more fully formed compositions, and of thinking about the meta-composition: its larger structures and flow.

If serialism isn't your thing, similar approaches can be taken with more conventional harmonic material and metric structure.

After getting the patch to work, play around with your own settings and modifications, and check back soon for another algorithmic composition tutorial!
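The master clock idea can be sketched in Python as a lookup from seconds to parameter changes. Treat everything here as illustrative: the receive names `voices`, `density` and `octaves` are invented, and only the 1-second settings and the stop at 60 seconds follow the description given earlier; the in-between entries are made up.

```python
# Hypothetical timeline: second -> values to send to the receives.
TIMELINE = {
    1:  {"voices": 1, "density": 5, "octaves": 1},   # sparse opening
    20: {"voices": 3, "density": 3, "octaves": 3},   # invented entry
    40: {"voices": 4, "density": 2, "octaves": 4},   # invented entry
    55: {"voices": 1, "density": 5, "octaves": 1},   # sparse ending
    60: {"running": False},                          # turn the patch off
}

def run_timeline(timeline, total_seconds):
    """Step a counter once per 'second' and apply any messages that are due.

    Returns the parameter state after each second; no real time passes.
    """
    state = {"running": True}
    history = []
    for second in range(1, total_seconds + 1):   # metro + counter
        if second in timeline:                   # select
            state.update(timeline[second])       # message box with sends
        history.append(dict(state))
        if not state["running"]:
            break
    return history

for second, state in enumerate(run_timeline(TIMELINE, 90), start=1):
    if second in TIMELINE:
        print(second, state)
```

Keeping the whole form of the piece in one table like this makes it easy to try a different overall shape without touching the note-generating logic.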

